Fifteen years of professionally evaluating hundreds of gadgets for major publications have produced a clear, real-world method for gadget performance testing. This approach bypasses marketing hype and focuses on practical, everyday usability, a lesson learned from early experiences with devices that underperformed despite impressive specifications. The consumer electronics industry constantly evolves, with new processors, AI capabilities, and battery technologies emerging at a rapid pace. However, the core principles of effective testing remain consistent: simulating real-world usage to uncover how a device truly performs under the demands of daily life.

Last updated: April 30, 2026

Latest Update (April 2026)

Consumer sentiment analyses from early 2026 point to ongoing frustration with unmet performance expectations, especially for AI-enhanced features that demand significant processing power or battery life without clear real-world benefits. Investigations into benchmark practices, such as those highlighted by Geeky Gadgets in January 2026, raise questions about whether companies tune private builds for leaderboards that differ from shipped consumer versions. This underscores the need for independent, real-world testing. The ASUS ROG NUC 2025, featuring a Core Ultra 9 275HX and RTX 5080, exemplifies high-performance hardware where real-world usability and thermal management matter beyond raw specifications, as detailed in recent reviews from sources like Gadget Pilipinas. In addition, the development of advanced AI models like OpenAI’s “Spud” for interactive 3D world building, as reported by Geeky Gadgets on April 20, 2026, signals a push towards more computationally intensive applications that will test the limits of current and future hardware.

According to reports from Gadget Hacks on April 20, 2026, durability tests for Samsung’s latest foldable devices reveal significant changes in their construction and resilience. These findings matter to consumers who rely on their devices for daily, demanding tasks. The same Geeky Gadgets report from April 20, 2026 cites leaked details about ChatGPT 5.5 Pro tests as the basis for the “Spud” claims, a development that would likely tax mobile and desktop processing capabilities in the coming months and years. These advancements highlight the continuous need for rigorous, real-world performance evaluations.

Why ‘Out-of-the-Box’ Performance is a Myth

The notion of flawless ‘out-of-the-box’ performance is a marketing ideal that rarely aligns with real-world usage. Initial device states, before app installations and system updates, offer a misleading picture. True performance reveals itself after a device has been personalized and subjected to daily demands. Software updates can dramatically alter a device’s behavior, and thermal throttling—where performance is reduced to manage heat—is often not evident during brief benchmark tests. Battery life claims are frequently based on highly optimized, unrealistic usage scenarios. The approach to gadget performance testing must simulate the complexities of daily life, not the controlled environment of a laboratory.

According to a 2024 study by the Baymard Institute, a significant percentage of users abandon purchases due to a lack of real-life product information and reviews. This gap persists in early 2026, with consumer dissatisfaction stemming from unmet performance expectations, especially with new AI functionalities. As Gadget Hacks reported in December 2025 regarding the Samsung Galaxy Z TriFold, even advanced devices can fail durability and performance tests under scrutiny. Performance also extends beyond raw processing power to the overall user experience: ergonomics and user comfort remain key considerations, as seen in Gadget Review’s October 2025 review of the E-Win Flash XL upgraded series ergonomic chair.

My Core Toolkit: Everyday Gear for Real Testing

Effective gadget testing doesn’t require specialized laboratory equipment. The focus is on human experience, not just machine-readable data. The essential toolkit remains simple and practical, prioritizing relatability and accessibility for the average consumer.

- Your Current Device: A direct comparison with the gadget being replaced offers the most relevant benchmark for speed improvements in tasks like video exports or app loading times. It gives users considering an upgrade a tangible reference point.

- A Standard Set of Apps: Installing a consistent suite of approximately 20 applications—including social media platforms (e.g., Instagram, TikTok), productivity tools (e.g., Notion, Asana, Google Workspace), creative software (e.g., Adobe Photoshop Express, CapCut), and demanding games (e.g., Genshin Impact, Call of Duty: Mobile)—provides a reliable baseline for app launch times, multitasking capabilities, and general responsiveness across different devices. This diverse selection ensures testing covers a broad spectrum of user activities.

- A Large 4K Video File: A standardized 10-minute, 4K video file (approximately 5GB) is used to test transfer speeds (via USB-C or wireless), video editing performance within mobile or desktop applications, and battery consumption during sustained playback. Using the same file every time provides a consistent metric for media-intensive tasks.

- A Stopwatch: Key actions are timed manually: boot-up time (from power button press to usable desktop/home screen), app loading (cold and warm starts for the standard app suite), file transfers (internal to external storage and vice versa), and video exports. Each is timed three times and averaged, which reduces the effect of random fluctuations and of reaction-time error in manual timing.

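- A Lux Meter App: A simple app that uses a second device’s ambient light sensor or camera to take readings of the screen under various lighting conditions (indoor, direct sunlight). These apps report illuminance in lux rather than luminance in nits, so the readings work best as a relative comparison between devices and as a rough check on marketing claims about peak brightness and screen visibility, not as a precise nit measurement.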

Synthetic benchmarks like Geekbench and 3DMark are used sparingly, primarily as a sanity check. Significant deviations from expected scores for similar hardware can indicate underlying software problems or a fault in the specific unit being tested, as independent reviewers often note.
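
The sanity check described above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive procedure: the function names, the 10% tolerance, and the example scores are all assumptions, and the reference score would have to come from published results for comparable hardware.

```python
def score_deviation(measured, reference):
    """Signed fractional deviation of a measured score from a reference score."""
    if reference <= 0:
        raise ValueError("reference score must be positive")
    return (measured - reference) / reference


def is_anomalous(measured, reference, tolerance=0.10):
    """True when the score differs from the reference by more than the tolerance.

    The 10% default is an assumed threshold, not an industry standard.
    """
    return abs(score_deviation(measured, reference)) > tolerance


# Hypothetical scores, for illustration only.
print(is_anomalous(measured=8200, reference=10000))
```

A flagged result is a prompt to retest or check for pending updates, not proof of a defect.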

Testing Methodology: Simulating Real-World Usage

The methodology prioritizes repeatable, quantifiable tests that mimic common user behaviors. This approach ensures that performance metrics are not only impressive on paper but also translate into a smooth user experience.

Boot-Up and Shutdown Times

Measuring the time from pressing the power button to a fully functional operating system (desktop or home screen) provides a baseline for initial user interaction. This is repeated three times, with the average recorded. Similarly, shutdown times are measured to assess system stability and efficiency.
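
As a rough sketch of the three-run averaging, the snippet below summarizes a set of stopwatch readings. The function name and the sample timings are hypothetical. The spread is reported alongside the mean because a large gap between runs suggests the measurement should be repeated.

```python
from statistics import mean


def summarize_runs(times_s):
    """Return the mean and the max-min spread of repeated stopwatch timings."""
    if not times_s:
        raise ValueError("need at least one timing")
    return {
        "mean_s": round(mean(times_s), 2),
        "spread_s": round(max(times_s) - min(times_s), 2),
    }


# Hypothetical boot timings in seconds, for illustration only.
boot_runs = [21.4, 20.9, 22.1]
print(summarize_runs(boot_runs))
```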

Application Launch and Responsiveness

This is a critical area for daily usability. Tests include:

- Cold Starts: Timing how long it takes for an application to launch from a completely closed state. This is performed for all apps in the standard suite.

- Warm Starts: Timing launches when the app is still in the device’s memory from a previous session. This reflects how quickly users can return to tasks.

- Multitasking: Switching between multiple applications (e.g., a browser with 10 tabs, a word processor, and a messaging app) to gauge how well the device manages background processes and memory. Performance is assessed by how quickly the user can return to each app without a significant delay or reload.
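
One way to turn the cold and warm timings above into a multitasking signal is to check whether a "warm" return took nearly as long as a cold start, which would suggest the app was dropped from memory and reloaded. The sketch below assumes hand-recorded timings; the function names, the 0.8 ratio, and the app timings are illustrative assumptions only.

```python
def warm_start_saving(cold_s, warm_s):
    """Fraction of launch time saved when the app is still in memory."""
    if cold_s <= 0:
        raise ValueError("cold start time must be positive")
    return (cold_s - warm_s) / cold_s


def likely_reloaded(cold_s, warm_s, ratio=0.8):
    """True when a warm start took at least `ratio` of the cold start time.

    The 0.8 ratio is an assumed cut-off, chosen only for illustration.
    """
    return warm_s >= ratio * cold_s


# Hypothetical (cold, warm) timings in seconds for two apps.
timings = {"browser": (3.0, 0.6), "game": (12.0, 11.0)}
for app, (cold, warm) in timings.items():
    print(app, round(warm_start_saving(cold, warm), 2), likely_reloaded(cold, warm))
```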

Performance Under Load

This tests how devices handle demanding tasks:

- Video Playback: Sustained playback of the 4K video file to monitor for stuttering, dropped frames, and battery drain.

- Video Export/Rendering: Using mobile or desktop editing software to export the 4K file or a shorter edited segment. The time taken is recorded, along with any noticeable performance degradation or system instability.

- Gaming: Running demanding mobile games like Genshin Impact or Call of Duty: Mobile at recommended settings. Performance is judged by frame rates (FPS), stability, and thermal output. Devices that overheat and throttle performance significantly are flagged.

- Complex Computations: For devices expected to handle advanced tasks (e.g., workstations, high-end laptops), tests might involve running simulations, compiling code, or processing large datasets. As AI models like OpenAI’s “Spud” become more prevalent, as reported by Geeky Gadgets on April 20, 2026, testing these computational capabilities will become increasingly important.

Battery Life Assessment

Battery life is tested under realistic, mixed usage scenarios, not just video playback. This involves a combination of web browsing, social media use, light gaming, and productivity tasks over several hours. Specific tests include:

- Screen-On Time: Tracking the total time the screen is actively used until the battery reaches a critical level (e.g., 10%).

- Standby Drain: Monitoring battery loss overnight or during periods of inactivity to identify potential software or hardware issues.

- Task-Specific Drain: Measuring battery consumption during intensive tasks like gaming or video export, comparing it against manufacturer claims.
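
The battery figures above reduce to simple arithmetic, sketched here in Python. The function names and sample readings are hypothetical, and the projection assumes the battery drains at a constant rate, which real devices only approximate. The 10% cut-off mirrors the critical level used for screen-on time.

```python
def drain_rate_pct_per_hour(start_pct, end_pct, hours):
    """Average battery percentage lost per hour over a test window."""
    if hours <= 0:
        raise ValueError("test duration must be positive")
    return (start_pct - end_pct) / hours


def projected_hours_to_cutoff(start_pct, rate_pct_per_hour, cutoff_pct=10):
    """Estimated hours until the cut-off level, assuming linear drain."""
    if rate_pct_per_hour <= 0:
        raise ValueError("drain rate must be positive")
    return (start_pct - cutoff_pct) / rate_pct_per_hour


# Hypothetical reading: 100% down to 70% after two hours of mixed use.
rate = drain_rate_pct_per_hour(100, 70, 2)
print(rate, projected_hours_to_cutoff(100, rate))
```

The same rate function can be applied to an overnight standby window or to a single gaming session, which makes the three tests directly comparable.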

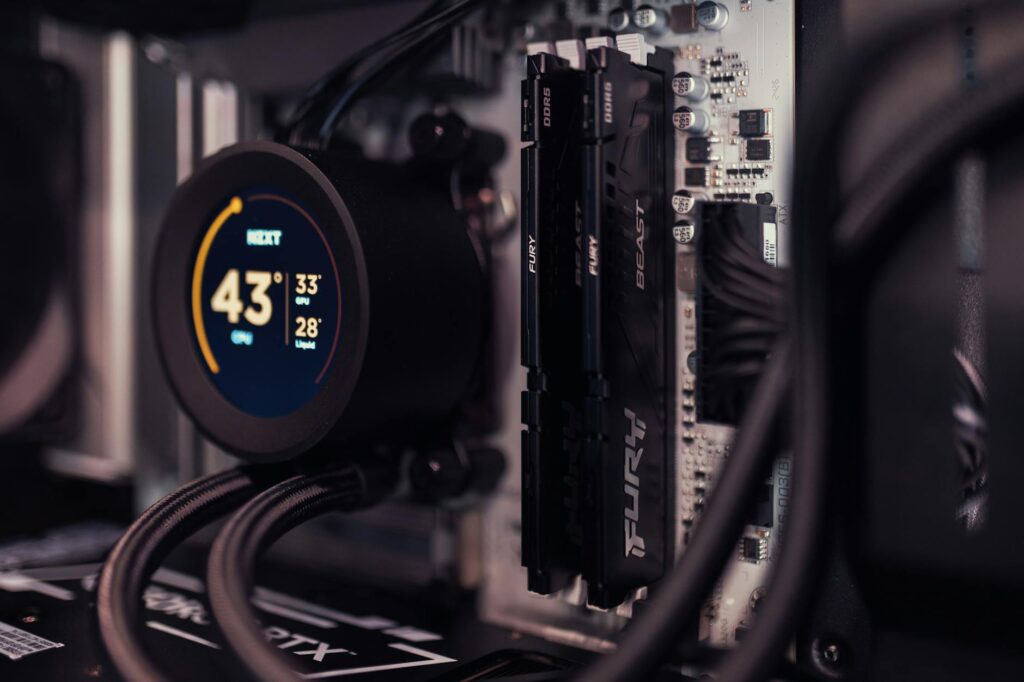

Thermal Management

Devices are monitored for heat buildup during prolonged high-performance tasks. Excessive heat often leads to thermal throttling, where the processor slows down to prevent damage. External temperature readings using an infrared thermometer or even just subjective feel during extended use provide valuable data. Independent reviews, such as those examining the thermal performance of the ASUS ROG NUC 2025, highlight the importance of this aspect.
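
Throttling can also be estimated from frame rate readings noted at regular intervals during a long session. The following is a minimal sketch under stated assumptions: the function names, the three-sample window, the 15% flag threshold, and the sample readings are illustrative and not part of any standard.

```python
from statistics import mean


def sustained_drop(fps_samples, window=3):
    """Fractional drop between the first and last `window` FPS readings."""
    if len(fps_samples) < 2 * window:
        raise ValueError("need at least two full windows of samples")
    early = mean(fps_samples[:window])
    late = mean(fps_samples[-window:])
    return (early - late) / early


def flag_throttling(fps_samples, threshold=0.15, window=3):
    """True when the sustained drop exceeds the assumed threshold."""
    return sustained_drop(fps_samples, window) > threshold


# Hypothetical readings taken every five minutes during a gaming session.
session = [60, 60, 60, 55, 48, 45, 45, 45]
print(round(sustained_drop(session), 2), flag_throttling(session))
```

A drop in frame rate is only indirect evidence, so it is best read alongside the temperature readings described above.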

Connectivity and Transfer Speeds

Testing Wi-Fi and Bluetooth performance involves checking connection stability and transfer speeds for large files. USB-C port speeds are verified by transferring the standard 4K video file to and from external SSDs, comparing results against the advertised transfer rates.
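
Comparing a timed transfer against an advertised rate takes one unit conversion, since port speeds are usually quoted in gigabits per second while transfers are easier to reason about in megabytes per second. The sketch below uses hypothetical function names and numbers, treats the 5GB test file as 5,000,000,000 bytes, and uses decimal units throughout. Advertised figures are signaling rates, so real transfers will fall short of them even on a healthy port.

```python
def throughput_mb_per_s(size_bytes, seconds):
    """Measured throughput in megabytes per second (1 MB = 1,000,000 bytes)."""
    if seconds <= 0:
        raise ValueError("transfer time must be positive")
    return size_bytes / 1_000_000 / seconds


def gbps_to_mb_per_s(gbps):
    """Convert an advertised rate in gigabits per second to megabytes per second."""
    return gbps * 1000 / 8


# Hypothetical result: the 5GB file copied in 10 seconds over a 10 Gbps port.
measured = throughput_mb_per_s(5_000_000_000, 10)
advertised = gbps_to_mb_per_s(10)
print(measured, advertised, round(measured / advertised, 2))
```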

Screen Quality and Brightness

Using a lux meter app, screen brightness is compared in various lighting conditions. This gives a rough check on manufacturer specifications for peak brightness (nits) and helps assess outdoor visibility under different ambient light settings. A lux meter app cannot measure color accuracy, so any observations about color remain subjective.

The Role of AI in Modern Gadget Testing

Artificial intelligence is no longer a futuristic concept; it’s integrated into many devices and applications, profoundly impacting performance. Testing must account for AI’s role:

- AI-Accelerated Tasks: Many applications now use AI for features like image enhancement, text generation, or real-time translation. Testing involves evaluating the speed and quality of these AI-driven features. For instance, the development of advanced AI models like OpenAI’s “Spud” for interactive 3D world building, reported by Geeky Gadgets on April 20, 2026, necessitates new testing protocols for AI computation.

- On-Device AI Processing: Smartphones and laptops increasingly feature dedicated NPUs (Neural Processing Units). Performance tests should include benchmarks that specifically target these units, assessing their efficiency in handling AI workloads.

- AI’s Impact on Battery Life: AI processing can be power-intensive. Evaluating battery drain during AI-heavy tasks is essential to provide realistic usage expectations.

- AI-Powered Software Updates: Future software updates might introduce new AI functionalities that could alter performance. Long-term testing considerations must include how these updates affect the device’s speed, responsiveness, and battery life.

Addressing Durability and Long-Term Value

Raw performance is only one aspect of a gadget’s value. Durability and longevity are equally important. Recent developments in foldable technology, as highlighted by Gadget Hacks on April 20, 2026, show significant changes in durability testing, indicating a stronger focus on build quality. Testing should consider:

- Build Quality: Assessing the materials used, the sturdiness of hinges (on foldables), button feedback, and overall construction quality.

- Wear and Tear: Observing how the device holds up after repeated use, including screen resilience to scratches, port durability, and battery degradation over time. While direct long-term testing is impractical for reviews, understanding the materials and design choices provides insights.

- Software Support Longevity: Manufacturers’ commitments to providing software and security updates for a specified period are critical for long-term usability and security. Devices that receive consistent updates tend to maintain better performance and security over their lifespan.

Frequently Asked Questions

What is the most important factor in gadget performance testing?

The most important factor is simulating real-world usage. While synthetic benchmarks provide some data, how a device performs during everyday tasks like launching apps, multitasking, and handling demanding applications is far more indicative of user experience.

How do software updates affect gadget performance?

Software updates can significantly impact performance. They can optimize existing functions, introduce new features (often AI-driven, which can increase resource demands), patch security vulnerabilities, or sometimes introduce bugs that degrade performance. It’s essential to re-evaluate performance after major updates.

Are synthetic benchmarks like Geekbench useful?

Synthetic benchmarks are useful as a secondary check. They help compare raw processing power between devices with similar hardware and can flag significant performance anomalies. However, they don’t reflect real-world usability or how a device handles complex, everyday tasks.

How can I test battery life realistically?

Realistic battery testing involves mixed usage. Combine activities like web browsing, social media scrolling, video streaming, light gaming, and productivity tasks throughout the day. Measure screen-on time and observe standby drain to get a complete understanding.

What is thermal throttling and how does it impact performance?

Thermal throttling is a mechanism where a device reduces its processing speed to prevent overheating. This commonly occurs during sustained, intensive tasks like gaming or video editing. It directly impacts performance by causing slowdowns and reducing frame rates, making the device feel sluggish.

Conclusion

Developing a complete gadget performance testing method requires moving beyond marketing claims and synthetic scores. By focusing on real-world usage scenarios, using a practical toolkit, and considering the evolving role of AI and durability, testers can provide consumers with the information they need to make informed purchasing decisions. The goal is to assess not just how fast a gadget can be, but how well it performs for the user, day in and day out.

Source: Britannica

Editorial Note: This article was researched and written by the Serlig editorial team. We fact-check our content and update it regularly. For questions or corrections, contact us.